Why most enterprise AI projects die before production

There's a gap between an impressive AI demo and a system that runs reliably at 2 AM when nobody's watching. We've spent the last decade building software for government agencies, healthcare providers, and financial institutions in Israel. The systems we build handle sensitive data, operate under strict regulatory constraints, and can't afford an AI component that hallucinates.

When we started applying AI to these environments, we quickly realized that wrapping a GPT API wasn't going to work. You can't connect a language model to a government healthcare system, cross your fingers, and hope it gives accurate answers. These environments need AI that is built around their existing systems, permission models, and audit requirements.

What we mean by "Agentic AI"

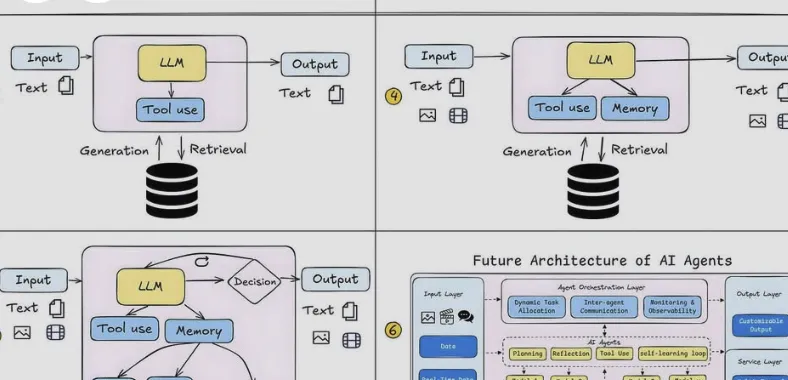

Agentic AI refers to AI systems built as goal-oriented agents that reason through multi-step tasks, interact with existing databases and APIs, and operate within security and compliance boundaries. Compare this to the typical chatbot approach: a single LLM call that generates a response with no context about the organization it's serving.

Our Agentic Framework is the infrastructure we built to make this work at scale. It handles three things that most AI implementations skip:

- Task orchestration — an agent breaks a complex request into steps, executes them in sequence, and adjusts if something fails mid-process.

- Two-way system integration — agents read from and write to existing enterprise systems (CRM, case management, document repositories) through secure API connections.

- Built-in compliance — permission models, audit logs, and regulatory constraints are part of the framework, not afterthoughts.
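To make these three responsibilities concrete, here is a minimal Python sketch of how they might fit together in a single loop. It is an illustration, not the Agentic Framework's actual API: `Step`, `AuditLog`, `run_task`, `PermissionDenied`, and permission strings like `"crm:read"` are hypothetical names, and "adjusts if something fails" is reduced to a retry followed by an optional fallback. In a real deployment the lambda actions would be authenticated calls to a CRM or case management system.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List, Optional, Set

# Hypothetical names for illustration only; not a real framework API.

@dataclass
class Step:
    name: str
    permission: str                                  # permission the caller must hold
    action: Callable[[dict], dict]                   # reads context, returns updates
    fallback: Optional[Callable[[dict], dict]] = None  # used if all attempts fail
    max_retries: int = 1

@dataclass
class AuditLog:
    entries: List[dict] = field(default_factory=list)

    def record(self, user: str, step: str, outcome: str) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "step": step,
            "outcome": outcome,
        })

class PermissionDenied(Exception):
    pass

def run_task(steps: List[Step], user: str, user_permissions: Set[str],
             audit: AuditLog, context: Optional[dict] = None) -> dict:
    """Run steps in order, enforcing permissions and auditing every outcome."""
    context = dict(context or {})
    for step in steps:
        if step.permission not in user_permissions:
            audit.record(user, step.name, "denied")
            raise PermissionDenied(f"{user} lacks {step.permission!r} for {step.name}")
        for attempt in range(1, step.max_retries + 2):  # first try plus retries
            try:
                context.update(step.action(context))
                audit.record(user, step.name, f"ok (attempt {attempt})")
                break
            except Exception as exc:
                audit.record(user, step.name, f"failed: {exc}")
        else:
            # Every attempt failed: adjust via the fallback if there is one, else stop.
            if step.fallback is None:
                raise RuntimeError(f"step {step.name} failed with no fallback")
            context.update(step.fallback(context))
            audit.record(user, step.name, "fallback used")
    return context

# Usage: a lookup that times out once, then succeeds on retry.
calls = {"n": 0}

def flaky_lookup(ctx: dict) -> dict:
    calls["n"] += 1
    if calls["n"] == 1:
        raise TimeoutError("CRM timeout")
    return {"case_id": 42}

steps = [
    Step("lookup_case", "crm:read", flaky_lookup),
    Step("draft_reply", "docs:write", lambda ctx: {"reply": f"Re: case {ctx['case_id']}"}),
]
audit = AuditLog()
result = run_task(steps, "clerk-7", {"crm:read", "docs:write"}, audit)
```

The design point worth noting is that the permission check and the audit entry live inside the orchestration loop itself, so no step can run, fail, or be refused without leaving a record.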

The framework itself is domain-neutral. We configure it for specific industries, but the core infrastructure handles the hard parts: state management, error recovery, and security.
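State management and error recovery are easiest to see in a small example. The Python sketch below assumes hypothetical names (`run_with_checkpoint` and the step functions), and a local JSON file stands in for whatever durable store a production system would use. It saves the task's state after every completed step, so if the process dies mid-task, rerunning it skips the finished steps and resumes from the checkpoint. The runner knows nothing about healthcare or finance; all domain-specific behavior lives in the steps passed to it.

```python
import json
import os
import tempfile
from typing import Callable, List, Tuple

# Hypothetical sketch: a domain-neutral runner that checkpoints after each step.
StepFn = Callable[[dict], dict]

def run_with_checkpoint(task_id: str, steps: List[Tuple[str, StepFn]],
                        checkpoint_dir: str) -> dict:
    path = os.path.join(checkpoint_dir, f"{task_id}.json")
    if os.path.exists(path):
        with open(path) as f:
            state = json.load(f)  # resume from the last saved state
    else:
        state = {"done": [], "context": {}}
    for name, fn in steps:
        if name in state["done"]:
            continue  # this step completed before the crash; don't redo it
        state["context"].update(fn(state["context"]))
        state["done"].append(name)
        # Write to a temp file, then atomically replace, so a crash
        # mid-write cannot leave a corrupted checkpoint behind.
        tmp = path + ".tmp"
        with open(tmp, "w") as f:
            json.dump(state, f)
        os.replace(tmp, path)
    return state["context"]

# Usage: the second step crashes once, then the task is rerun.
calls = []

def fetch(ctx: dict) -> dict:
    calls.append("fetch")
    return {"record": "patient-123"}

crash = {"armed": True}

def summarize(ctx: dict) -> dict:
    calls.append("summarize")
    if crash["armed"]:
        crash["armed"] = False
        raise RuntimeError("worker crashed")
    return {"summary": f"summary of {ctx['record']}"}

steps = [("fetch", fetch), ("summarize", summarize)]
with tempfile.TemporaryDirectory() as d:
    try:
        run_with_checkpoint("task-1", steps, d)
    except RuntimeError:
        pass  # simulated crash after "fetch" was checkpointed
    result = run_with_checkpoint("task-1", steps, d)
```

On the rerun, `fetch` is not called again. One caveat the sketch glosses over: a step that writes to an external system and crashes before its checkpoint is saved will run again on resume, so such steps need to be idempotent. It also assumes every step returns JSON-serializable data.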